Do people actually want this?

Like, I know the megacorps that control our lives do (since it’s a cheap way of adding value to their products), but what about actual users? I think many see it as a novelty and a toy rather than a productivity tool, especially as public awareness of “hallucinations” and of the plight faced by artists grows.

Kinda feels like the whole “voice-controlled assistants” bubble from a while ago. Sure, they are relatively commonplace nowadays, but nowhere near as universal as people thought they would be.

Do people actually want this?

Nope. Just like those stupid hard-coded buttons on my Roku remote that I have never used.

Do people actually want this?

Absolutely not. But this is the new standard now.

The new Micro$oft standard, which, as always, is bullshit and should be avoided and ignored at all times.

Yes. The Microsoft standard. Like the Windows key on all keyboards nowadays.

Not a single soul wants this. They just want to use every foul trick to get you to use Copilot (even by accident), just like they do with Bing and their other garbage.

Another key to bind to something else? Hell yeah

Nope, just a new logo on an existing key.

:(

Current LLMs are manifestly different from Cortana (🤢) because they are actually somewhat intelligent. Microsoft’s Copilot can do web searches and perform basic tasks on the computer, and because of their exclusive contract with OpenAI they’re gonna have access to more advanced versions of GPT, which will be able to do more high-level control and automation on the desktop. It will 100% be useful for users to have this available, and I expect even Linux desktops will eventually add local LLM support (once consumer compute and the tech mature). It is not just glorified autocomplete; its output is actually fairly well correlated with the output of real human language cognition.

The main issue for me is that they get all the data you input and mine it for better models without your explicit consent. This isn’t an area where open source can catch up without significant capital behind it, so we have to hope Meta, Mistral and government-funded projects give us what we need to have a competitor.

Sure, all that may be true, but it doesn’t answer my original concern: is this something that people want as a core feature of their OS? My point wasn’t “oh, this is only as technically sophisticated as voice assistants”; it was more “voice assistants never really took off as much as people thought they would”. I may be cynical and grumpy, but to me it feels like these companies are failing to read the market.

I’m reminded of a presentation I saw where they were showing off fancy AI technology. Basically, if you were in a one-on-one call with someone and had to leave to answer the doorbell or something, the other person could keep speaking and an AI would summarise what they said when you got back.

It felt so out of touch with what people would actually want to do in that situation.

I hope the LLM bubble pops this year. The degree of overinvestment by megacorps is staggering.

I suppose that, having worked with LLMs a whole bunch over the past year, I have a better sense of what I meant by “automate high level tasks”.

I’m talking about an assistant where, let’s say, you need to edit a podcast video to add graphics and cut out dead space or mistakes that you corrected in the recording. You could tell the assistant to do that, and it would open the video in Adobe Premiere Pro, do the necessary tasks, then ask you to review it and check whether it made mistakes.

Or if you had an issue with a particular device, e.g. your display, the assistant would research the issue and perform the necessary steps to troubleshoot and fix it.

These are currently hypothetical scenarios, but GPT-4 can already perform some of these tasks, and specifically training it to be a desktop assistant and to do more agentic tasks will make this a reality in a few years.

It’s also already useful for reading and editing long documents, and will only get better on this front. You can already use an LLM to query your documents and give you summaries, or to use them as instructions/research to aid in performing a task.

I guess my understanding of an LLM must be way off base.

I had thought that asking an LLM to edit a video was simply out of scope. Like asking your self driving car to wash the dishes.

A year ago local LLMs were just not there, but the stuff you can run now with 8 GB of VRAM is pretty amazing, if not quite as good as GPT-4 yet. Honestly, even if it stops right where it is, it’s still powerful enough to be a foundation for a more accessible and efficient way to interface with computers.

This is the dumbest fucking thing I’ve ever heard of. I’m not buying any keyboard or laptop that has this key. There’s enough Linux-first vendors these days that it’s easy to avoid (Framework, System76, Tuxedo, etc). It’s time to be done with Lenovo and Dell.

Same. I think I might give the System76 Darter a try when I eventually have to replace my XPS 9370. It’s bad enough that my computer comes with a Windows logo on the Super key and often Windows preinstalled. Shipping with a non-ANSI/ISO layout is a no-buy for me.

Unfortunately, the “linux-first” vendors do not offer better deals than their competition.

It depends on how and what you’re measuring. A lot of Linux-first vendors, like System76 and Purism, do some serious work on the firmware and boot systems of their machines, which for some people is a huge value-add by comparison.

They absolutely do, when one considers the negative value of Windows.

Like with the Windows key, this won’t be an option.

Ah yes, just like you had that option with the windows key right?

I don’t care as long as the placement is ok and I can map it to something useful. I’m a GNOME user so the Windows/Super key gets a lot of use. It’s nice to have. A new key that I use for all my custom shortcuts would actually be kind of nice. Who cares that the default keycaps are a Windows icon and this Copilot thing? Change the keycaps and they are just keys.

This is Clippy v2.0 and I’m sure it will be just as helpful.

They’ve learned from their mistakes, and concluded that Clippy failed because there was no Clippy key.

Oh “great”, more crap between Ctrl and Alt.

[Grumpy grandpa] In my times, the space row only had five keys! And we did more than those youngsters do with eight, now nine keys!

In my time it was also nine. Back to the roots. ;->

Why doesn’t my keyboard have a thumbs-up key?!

Nice

From the picture, it’s just the context menu key with a new key cap.

That’s still a new key for some people. My laptop doesn’t have a context key, for example.

Aaaaah. I really, really wanted to complain about the excessive amount of keys.

(My comment above is partially a joke - don’t take it too seriously. Even if a new key was added it would be a bit more clutter, but not that big of a deal.)

So you can press it by accident, activate the fucking AI and make the numbers go up, so Microsoft can go and tell investors “look, millions are using our AI”. So annoying.

That’s funny, because getting an ad for Copilot inside my Start menu was actually what made me go back to Linux after 10 years.

This tracks.

Why? Win+C launches Copilot already, if you want to use it. It’s simple enough currently, why change it? This will just make everything worse.

Why? Investment hype

Bingo

Awesome Keyboard with AI Support *

* On supported Operating Systems **

** With separate subscription.

I can’t wait to no longer find a keyboard without this key.

Welcome to the custom mechanical keyboard scene.

I’m pretty sure you’ll be able to find keyboards with a different icon than the ugly Copilot one, and then you can map it to whatever you want.

You can always use those keyboards from the 2000s.

Because most people are unaware of keybindings, and when they inevitably tap the new dedicated key they’ll probably be shown a subscription screen for Copilot Premium or whatever they call it.

IMO it’s a very disgusting and intrusive way of fishing subscriptions to the AI thing they’ve invested so much money on.

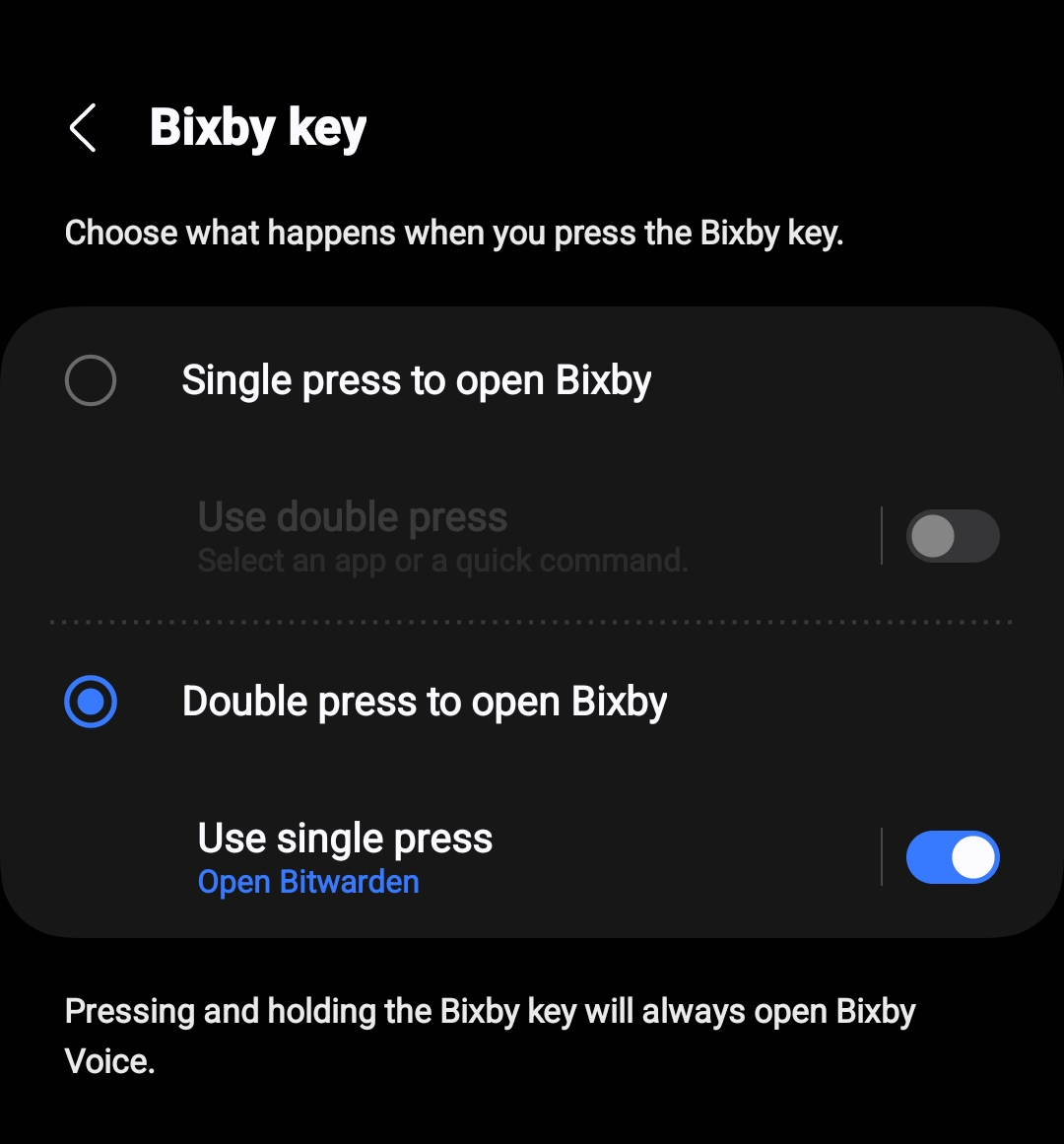

Like the shitty Bixby button on phones.

In the five years of owning this phone, I have never once pressed that button on purpose. I press it on accident at least once a week.

Five years… do you have the S9? ’Cause I’m exactly the same: never intentionally used it. Ever.

I have the S10+ and it’s actually useful, as you can remap the double-click on that button to open any app you like. But yeah, a single click never happened intentionally.

EDIT: F yeah, I just checked the settings and you can decide whether you want Bixby activation on single or double-click. Now I’ve set Bixby to double-click, and single-click opens my password manager. If you don’t select anything, a single click does nothing.

The setting is under “Advanced Features” -> “Bixby Key” for me.

This requires logging in to Bixby for me.

lol yep, S9.

Using it til it dies. Love this phone.

Can’t wait to see this gone in the next 3 years.

"Oh yeah I remember these keyboards! Good times, that was before the

before the what, op?

BEFORE THE WHAT??

sweats, knowing a time-traveler in our midst refused to tell us about the coming copilocalypse

Alas, often, no. Just another logo we can’t be rid of.

In some cases, the Copilot key will replace the Menu key or the right Control key, a Microsoft spokesperson told CNBC in an email. Some larger computers will have enough room for both the Copilot key and the right Control key, the spokesperson said.

That’s impressively awful

I guess I can just buy a sticker and remap the AI key to Ctrl instead lol

Hmm, maybe I can use it for the Compose key instead or Right Alt…

Compose has always been Caps Lock for me

as an i3wm user, I approve 😁

Lol, the first thing that went through my mind was “what feature can I add to i3 with this key?”

Unfortunately it would probably just replace the context menu key, which I’ve already set to switch keyboard layouts. 😁 It’s the best keybind I have, way faster than mod+space or alt+shift 😅

Linux laptops usually come with a super key.

What the fuck is a labtop?

It’s the same thing as a laptop. Labtops are laptops used in a lab. (Or maybe I just made a typo, who knows.)

Really milking that fad before they inevitably push anything useful behind a monthly paywall.

As long as the ability to manually turn off secureboot and remove the OS isn’t locked behind a subscription…

It’s already behind a paywall. You can’t access ChatGPT-4 without paying.

And yet they are still losing money by running ChatGPT 3.5 for free. I guess in the future they’ll switch to a small local model running on the hardware, once such models are capable enough.

I think it’s like anything on the modern web, they’ll lose money until they reach a critical mass of users who get accustomed to using ChatGPT in their day-to-day life, and then they’ll kill the free tier.

Except their free tier is still around for everything that they started as free: Outlook, Bing, Visual Studio Code; even Office is free for students and teachers.

They’ll always keep the low tier free to get people hooked and charge businesses whatever.

Microsoft has free tier Office tools because they’re data brokers now. TMK they didn’t always have free Outlook, it was bundled in Office, which cost money. I don’t see ChatGPT remaining free forever, it costs too much to run. I could be wrong though, depending on how much valuable data they can scrape from it.

Yeah, they didn’t have a free Office, Outlook or Visual Studio. Now they do, and there is no sign of them stopping. Bing is expensive and they aren’t stopping it.

ChatGPT is MS’s first real chance at dethroning Google search. They’re going to keep a free tier forever.

I am getting flashbacks to the multimedia keyboards of yesteryear:

https://deskthority.net/wiki/Multimedia_keyboard

Thanks MS, but no thanks, I don’t need it.

I love these, it has actual useful keys

I will admit that the volume wheel was awesome

For real though, I loved those. That wireless Logitech one with the volume dial lasted me a decade.

My mom had one, I absolutely loved using that thing when I did

yeah, the media controls are actually useful.

this kind of shit is what gives AI a bad rep

no one needs this

almost no one wants it

and they’ll kill it in a couple of years like they did with Cortana

how the fuck can they just decide this

Probably through licensing agreements with PC retailers.

But you can also just decide not to buy them.

can i decide to buy a keyboard without the windows key today?

There’s always the IBM Model M or, if you prefer USB, there are remakes with it.

Wow it’s yuuge

sure, any Apple keyboard

Umm, it’s just a keycap. You can map the key to whatever you want.

agreed, however it defeats the point that it’s going to be optional if they really decide to do it.

Microsoft is a monopoly. Stallman was right, as usual in software

stallman is still right

Please don’t.

I have nothing against the people that are working on AI and appreciate the work they do. However every time I see an article about a company using AI like this I just get the vibe that it’s a bunch of middle aged men trying desperately to make things like the “future” they saw when they were a kid. I’ve seen amazing implementations of AI in a lot of different ways but I’m so sick of dumb ideas like this because some guy that used to watch Star Trek as a kid wants to feel like they live in the future while piggybacking on someone else’s work. It’s like the painted tunnel in cartoons where it looks like a real tunnel but in reality it’s just a very convincing lie. And that’s all that it is. Complexity does not mean sophistication when it comes to AI and never has and to treat it as such is just a forceful way to make your ideas come true without putting in the real effort.

Sorry, I had to get that out. Also I have nothing against Star Trek and I used to watch it as a kid because my parents watched it all the time.

Complexity does not mean sophistication when it comes to AI and never has and to treat it as such is just a forceful way to make your ideas come true without putting in the real effort.

It’s a bit off-topic, but what I really want is a language model that assigns semantic values to the tokens, and handles those values instead of directly working with the tokens themselves. That would probably be far less complex than current state-of-the-art LLMs, but way more sophisticated, and require far less data for “training”.

I’m not sure I understand. Do you mean hearing codewords that trigger actions, as opposed to trying to understand the user’s intent through language? Or are there a few more layers to this whole thing than my moderate nerd cred will allow me to understand?

Not quite. I’m focusing on chatbots like Bard, ChatGPT and the like, and their technology (LLMs, or large language models).

At the core those LLMs work like this: they pick words, split them into “tokens”, and then perform a few operations on those tokens, across multiple layers. But at the end of the day they still work with the words themselves, not with the meaning being encoded by those words.
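For readers less familiar with that first step, here is a minimal toy sketch of splitting text into tokens and looking up one vector per token ID. It assumes a made-up four-word vocabulary, plain whitespace splitting and random vectors; real LLMs use learned subword tokenizers (such as BPE) and learned embedding weights, and the names `VOCAB`, `tokenize` and `embed` are hypothetical.

```python
import random

# Hypothetical toy vocabulary; real models use subword tokenizers with many thousands of entries.
VOCAB = {"the": 0, "cat": 1, "sat": 2, "<unk>": 3}
EMBED_DIM = 4

# One row per token ID. Random here (seeded for repeatability); a real model learns these weights.
_rng = random.Random(0)
EMBEDDING_TABLE = [[_rng.gauss(0.0, 1.0) for _ in range(EMBED_DIM)] for _ in range(len(VOCAB))]


def tokenize(text: str) -> list[int]:
    """Split on whitespace and map each word to its token ID (unknown words map to <unk>)."""
    return [VOCAB.get(word, VOCAB["<unk>"]) for word in text.lower().split()]


def embed(token_ids: list[int]) -> list[list[float]]:
    """Look up one vector per token ID; the model's layers then operate on these vectors."""
    return [EMBEDDING_TABLE[i] for i in token_ids]


ids = tokenize("The cat sat")
vectors = embed(ids)
print(ids)  # [0, 1, 2]
print(len(vectors), len(vectors[0]))  # 3 4
```

Everything after `embed` works on these vectors rather than on the strings themselves.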

What I want is an LLM that assigns multiple meanings to those words, and performs the operations above on the meaning itself. In other words, the LLM would actually understand you, not just chain words.

Semantic embeddings are a thing. LLMs “work with tokens” but they associate them with semantic models internally. You can externalize it via semantic embeddings so that the same semantic models can be shared between LLMs.

The source that I’ve linked mentions semantic embedding; so does further literature on the internet. However, the operations are still being performed with the vectors resulting from the tokens themselves, with said embedding playing a secondary role.

This is evident, for example, in excerpts like:

The token embeddings map a token ID to a fixed-size vector with some semantic meaning of the tokens. These brings some interesting properties: similar tokens will have a similar embedding (in other words, calculating the cosine similarity between two embeddings will give us a good idea of how similar the tokens are).

Emphasis mine. A similar conclusion (that the LLM is still handling the tokens, not their meaning) can be reached by analysing the hallucinations that your typical LLM bot outputs, and asking why each hallucination is there.
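The cosine similarity mentioned in the quoted excerpt is simple to compute. A minimal sketch follows; the three-dimensional vectors are invented for illustration (real embeddings are learned and have hundreds or thousands of dimensions), and `cosine_similarity` is a hypothetical helper, not a specific library’s API.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors: 1.0 = same direction, 0.0 = orthogonal."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for zero vectors")
    return dot / (norm_a * norm_b)


# Made-up "embeddings" for illustration only.
king = [0.9, 0.8, 0.1]
queen = [0.85, 0.75, 0.2]
banana = [0.1, 0.05, 0.95]

print(round(cosine_similarity(king, queen), 3))   # 0.996
print(round(cosine_similarity(king, banana), 3))  # 0.195
```

Note that this measures how close two vectors attached to tokens are, which is exactly the distinction being drawn here: similarity of token vectors, not an explicit representation of meaning.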

What I’m proposing is deeper than that. It’s to use the input tokens (i.e. morphemes) only to retrieve the sememes (units of meaning; further info here) that they’re conveying, then discard the tokens themselves, and perform the operations solely on the sememes. Then for the output you translate the sememes obtained by the transformer back into morphemes (i.e. tokens).

I believe that this would have two big benefits:

- The amount of data necessary to “train” the LLM will decrease. Perhaps by orders of magnitude.

- A major type of hallucination will go away: self-contradiction (for example: states that A exists, then that A doesn’t exist).

And it might be an additional layer, but the whole approach is considerably simpler than what’s being done currently - pretending that the tokens themselves have some intrinsic value, then playing whack-a-mole with situations where the token and the contextually assigned value (by the human using the LLM) differ.
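The proposal above can be illustrated with a toy sketch of the morpheme → sememe → morpheme round trip, with the “state” keyed on sememes instead of tokens. Everything here is hypothetical: the four-entry lexicon, the sememe labels and the names `to_sememes`, `to_tokens` and `SememeState` are invented for illustration, there is no transformer in it, and it only shows the bookkeeping idea. It says nothing about whether the approach would actually cut training data or hallucinations.

```python
# Hypothetical toy lexicon: several surface forms (tokens/morphemes) map to one sememe.
MORPHEME_TO_SEMEME = {
    "car": "VEHICLE",
    "automobile": "VEHICLE",
    "cat": "FELINE",
    "kitty": "FELINE",
}
# Hypothetical preferred surface form for each sememe, used only when producing output.
SEMEME_TO_MORPHEME = {"VEHICLE": "car", "FELINE": "cat"}


def to_sememes(tokens):
    """Input step: keep only the meanings; the original tokens are discarded."""
    return [MORPHEME_TO_SEMEME[t] for t in tokens]


def to_tokens(sememes):
    """Output step: translate sememes back into surface tokens."""
    return [SEMEME_TO_MORPHEME[s] for s in sememes]


class SememeState:
    """Toy 'reasoning' state that records existence claims per sememe, not per token."""

    def __init__(self):
        self.exists = {}

    def assert_exists(self, sememe, value):
        previous = self.exists.get(sememe)
        if previous is not None and previous != value:
            raise ValueError(f"contradiction: {sememe} was already asserted as {previous}")
        self.exists[sememe] = value


state = SememeState()
(vehicle,) = to_sememes(["car"])
state.assert_exists(vehicle, True)
# "automobile" resolves to the same sememe, so denying it is caught as a contradiction.
(same_vehicle,) = to_sememes(["automobile"])
try:
    state.assert_exists(same_vehicle, False)
except ValueError as err:
    print(err)  # contradiction: VEHICLE was already asserted as True

print(to_tokens(to_sememes(["kitty", "automobile"])))  # ['cat', 'car']
```

Because the check is keyed on `VEHICLE` rather than on the strings “car” and “automobile”, the synonym cannot slip past it, which is the kind of self-contradiction the second bullet is about.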

[This could even go deeper, handling a pragmatic layer beyond the tokens/morphemes and the units of meaning/sememes. It would be closer to what @njordomir@lemmy.world understood from my other comment, as it would then deal with the intent of the utterance.]