Need to let loose a primal scream without collecting footnotes first? Have a sneer percolating in your system but not enough time/energy to make a whole post about it? Go forth and be mid: Welcome to the Stubsack, your first port of call for learning fresh Awful you’ll near-instantly regret.

Any awful.systems sub may be subsneered in this subthread, techtakes or no.

If your sneer seems higher quality than you thought, feel free to cut’n’paste it into its own post — there’s no quota for posting and the bar really isn’t that high.

The post-Xitter web has spawned soo many “esoteric” right-wing freaks, but there’s no appropriate sneer-space for them. I’m talking redscare-ish, reality-challenged “culture critics” who write about everything but understand nothing. I’m talking about reply-guys who make the same 6 tweets about the same 3 subjects. They’re inescapable at this point, yet I don’t see them mocked (as much as they should be).

Like, there was one dude a while back who insisted that women couldn’t be surgeons because they didn’t believe in the moon or in stars? I think each and every one of these guys is uniquely fucked up and if I can’t escape them, I would love to sneer at them.

(Semi-obligatory thanks to @dgerard for starting this.)

Neo-Nazi nutcase having a normal one.

It’s so great that this isn’t falsifiable in the sense that doomers can keep saying, well “once the model is epsilon smarter, then you’ll be sorry!”, but back in the real world: the model has been downloaded 10 million times at this point. Somehow, the diamondoid bacteria have not killed us all yet. So yes, we have found out that Yud was wrong. The basilisk is haunting my enemies, and she never misses.

Bonus sneer: “we are going to find out if Yud was right” Hey fuckhead, he suggested nuking data centers to prevent models better than GPT4 from spreading. R1 is better than GPT4, and it doesn’t require a data center to run, so if we had acted on Yud’s geopolitical plans for nuclear holocaust, billions would have been incinerated for absolutely NO REASON. How do you not look at this shit and go, yeah maybe don’t listen to this bozo? I’ve been wrong before, but god damn, dawg, I’ve never been starvingInRadioactiveCratersWrong.

It’s wild that Yudkowsky saw a binary choice of “nuclear holocaust” and “superintelligence” and chose “nuclear holocaust” in the first place.

Like, even if I believed in FOOM, I’ll take my chances with the stupid sexy basilisk 🐍 over radiation burns and it’s not even fucking close.

I don’t want to say with absolute confidence that there’s no scenario I can imagine to which a nuclear apocalypse would be preferable (the real kind, not the Fallout kind). But I have yet to hear one.

The upcoming firestorms of climate change, while patrolling the Mojave desert, almost make you wish for nuclear winter. Oh wait, not like Fallout you said

nuclear winter is not a thing that can possibly happen, from what i understand

sagan et al overstated amount of soot put in upper atmosphere over 10x, for no particular reason other than trying to make a point

notice how no one talked about it after desert storm? oilfield fires provided negative evidence

They still do nuclear war climate modelling. It’s still bad.

Another dream shattered. Not sure if the oilfield fires were big enough. Volcanoes can cause some cooling right? pokes old yeller

yellowstone caldera erupting during Trump II would be fitting somehow

We’ve had recent eruptions in all the big categories, so we’re not due another one for a while and trying to cheat by setting one off early won’t allow sufficient pressure for a proper bang.

Not that I want to discourage you, but don’t be sad if you try for a year without summer and get a couple of weeks without flights instead.

The advanced sinophobia where the Chinese are so much better at everything than the west that even when they make better and cheaper bullshit machines than the Americans do and hand them out for free, it has apocalyptic consequences.

you can get banned on facebook now for linking to distrowatch https://www.tomshardware.com/software/linux/facebook-flags-linux-topics-as-cybersecurity-threats-posts-and-users-being-blocked and from distrowatch https://distrowatch.com/weekly.php?issue=20250127#sitenews

but it’s not as bad as you think, it’s slightly worse. it’s not only distrowatch; linux groups got banned too

gives a hell of a kicker to the numbers I found

I screenshot this the other day and forgot to post it. Well, enjoy.

i wonder which endocrine systems are disrupted by not having your head sufficiently stuffed into a toilet before being old enough to type words into nazitter dot com

Screenshot of an insta post of a screenshot of a tweet

Tweet:

I can’t believe ChatGPT lost its job to AI

In the process of looking for ways to link up with homeschool parents that aren’t doing it for culty reasons, I accidentally discovered the existence of a small but active subreddit for “progressive monarchists”. It’s titled r/progressivemonarchists, because their imagination in naming conventions only slightly outstrips their imagination for forms of government. Given how our usual sneer fodder overlaps with nrx I figured there are others here who I can inflict this headache on.

Quality shitpost, I could imagine some thirteen year old actually believing this.

Yea, no fascism whatsoever took place in the Netherlands, Denmark, Norway, Luxembourg, or Belgium during WW2, in which they were all very successfully avoiding being occupied by fascists.

Extra points to Spain who already avoided succumbing to their own homegrown brand of fascism before the Nazi German invasion of Poland, and where they avoided having fascists in power all the way until the 1970s. There’s a book I quite like about the war where that happened called Homage to Catalonia. I wonder if Orwell ever read it.

Missing from the list is Italy, which is no longer a constitutional monarchy, but used to be until 1946, which is why they were so good at avoiding fascism they even named it.

This might take the cake for the dumbest take I’ve seen from George Orwell and not for a lack of competition.

Oh yeah Britain didn’t become fascist, they just imposed brutal imperialist exploitation on colonies in Asia and Africa lol. It’s not fascism if you export it!

Fascism really is “we have imperialism at home”

i would love to read someone more familiar with historical fascism and imperialism who is able to articulate the link between the two. like, an analysis of imperialism as the construction and maintenance of borders across which exploitation is enforced and economic value is extracted – paired with the observation of fascism as the attempts to construct borders “across peoples” from within the imperial core

let’s also not forget these very liberal and not at all nazi-collaborating kingdoms of Romania, Bulgaria, Hungary, Thailand and Japan. honorable mentions to Cambodia that very successfully avoided Pol Pot and Iran that very successfully avoided islamic revolution

is it a real quote from Orwell or just something someone made up?

seems to be a real quote https://en.wikipedia.org/wiki/Monarchism#Liberty

Yeah then it is a shitty take.

A really hot take for sure. Apparently Orwell did say this, at least according to Wikipedia (I was doubtful initially), which is weird, but I also feel that Orwell is often misrepresented and we are probably missing context. For one, Orwell was a socialist and yet is somehow presented as a hero by Reaganists and the like.

(Reposting from the last thread)

Days since last open source issue tracker pollution by annoying nerds: zero

My investigation tracked to you [Outlier.ai] as the source of problems - where your instructional videos are tricking people into creating those issues to - apparently train your AI.

I couldn’t locate these particular instructional videos, but from what I can gather outlier.ai farms out various “tasks” to internet gig workers as part of some sort of AI training scheme.

Bonus terribleness: one of the tasks a few months back was apparently to wear a head mounted camera “device” to record one’s every waking moment

P.S. sorry for the linkedin link behind the mastodon link, but shared suffering and all that. I had to read “Uber for AI code data” so now you do too.

I had to read “Uber for AI code data” so now you do too.

Wow, what a fractal of cursed meaning. I don’t even understand what it really means, but it feels like understanding it any further would cause considerable psychic damage.

Well I can’t translate it but if you search for it… holy smokes are search engines amusingly bad at indexing federated content.

That random other Lemmy instance is actually doing the right thing here since it includes the right link rel canonical, so I guess Google just hasn’t caught up yet or something. I have no idea why Google chopped off the last byte of the IP address.

Live: Chinese AI bot DeepSeek sparks US market turmoil, wiping $500bn off major tech firm

Shares for leading US chip-maker Nvidia dropped more than 15% after the emergence of DeepSeek, a low-cost Chinese AI bot.

https://www.bbc.com/news/live/cjr85l2e4l4t

lmao

Folks around here told me AI wasn’t dangerous 😰 ; fellas I just witnessed a rogue Chinese AI do 1 trillion dollars of damage to the US stock market 😭 /s

is that Link??

Is it too early to hope that this is the beginning of the end of the bubble?

Also, does someone know why broadcom was also hit so hard? Is it because they make various networking-related chips used in datacenter infrastructure?

When hedge funds decide to flip the switch on something the reaction never looks rational. Meta was green today ffs.

Getting pretty tired of new coworker spruiking copilot all the time, please send me your sympathy and energy so I can weather this trial

I have added your coworker to the list of people I will imagine being tortured in the future. I’m no robotgod but it is the best I can do.

that word temporarily broke me (its constituent phonemes are all valid/typical afrikaans (and dutch) but the word itself made no sense), then I looked it up

that sounds exhausting. I wish to flippantly suggest launching your colleague to another planet, but cruel fate might reveal them to be a muskovian martian so perhaps best not to broach that subject…

You have my sympathy! Is the worst part that you have to review the slop or its general presence at all?

Asking because at my workplace it will be allowed soon, and some coworkers are unfortunately looking forward to it, and I’m horrified, especially by the thought of having to do code review then…

It’s more the latter. I can’t really stop anyone from using it, and playing the game of “can you tell if this snippet of code was LLMed” is a fool’s errand, so I have to choose to ignore that part of it. Testing de-risks bad code, but there is never enough testing… well, there’s only so much I can do within my pay grade.

I’ve since asked this person to stop talking about their copilot usage, so this issue has been resolved, for now.

And on a less downbeat and significantly more puerile note, Dan Fixes Coin Ops makes a nice analogy for companies integrating ai into their product.

that thread is a work of genius and answers what the next tech boom needs to be

dicks in mousetraps I MEAN whatever wastes electricity most, preferably with Nvidia cards

I do actually have a mechanism for using the sharp edges of NVidia cards for dickmouse trapping purposes. And we could - hypothetically - use the extraneous power inputs to mine Bitcoin or something, maximizing efficiency!

when your skulls are both packed solid with wet cat food

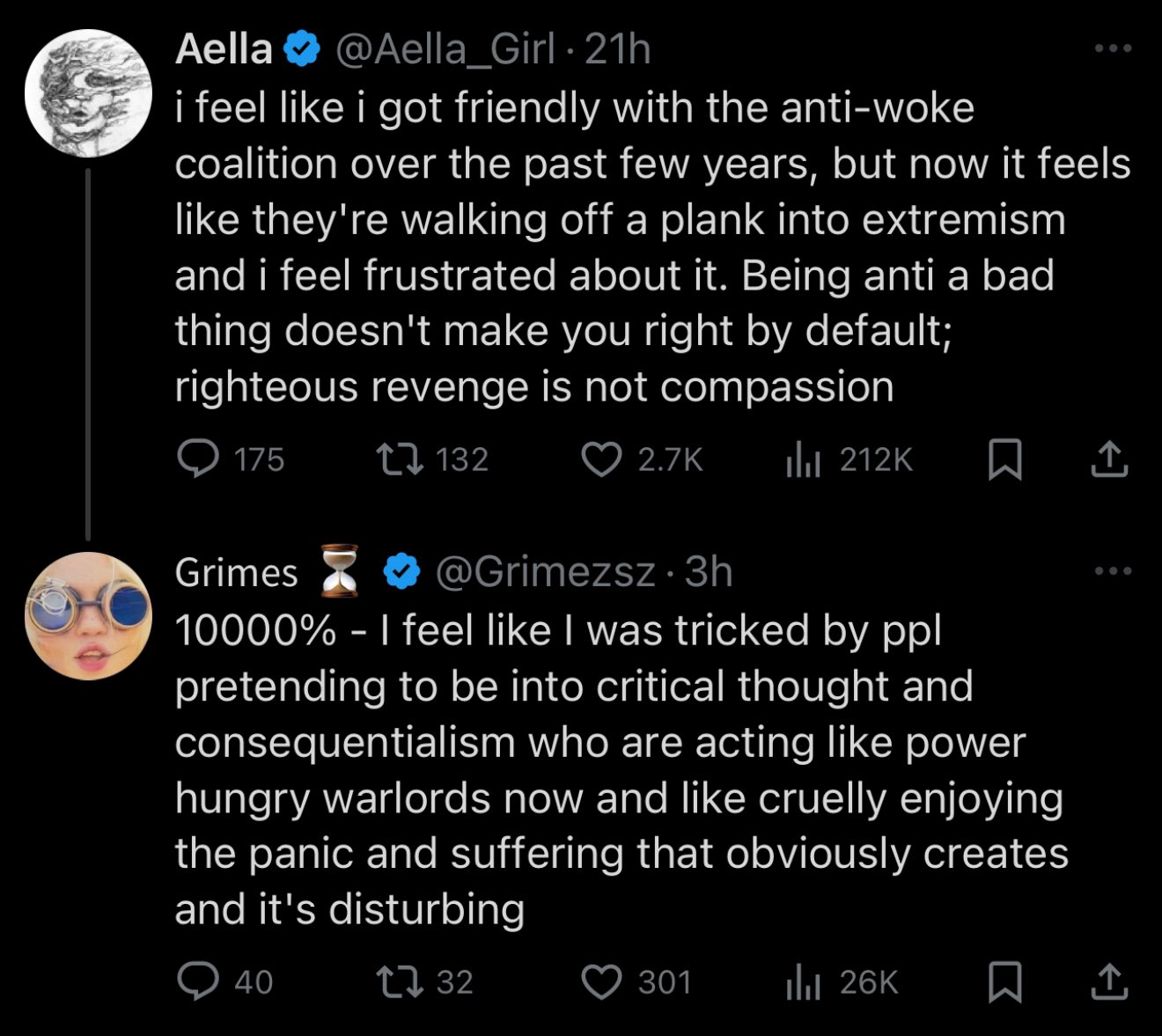

“Oh well, time to learn absolutely nothing from this and continue to be terrible people,” said Grimes and Aella mentally, and unbeknownst to them, because they each have one brain cell quantum-entangled* with the other’s, simultaneously

*I finished the three body problem trilogy recently! Where do I sign up for the ETO

aww, look at the little collaborators trying to pretend they both got duped instead of both having been active and enthusiastic enablers

b-but i thought they hated women rationally

I’d swear I’ve seen that exact same “realization” from Aella before, when she posted something like “I got really into tradcath practices for [the writer’s barely disguised fetish] reasons, and now I’m shocked that they really actually do hate sex work.”

Edit to add:

ok what the FUCK is goin on with the neo-trads? I was just over here enjoying this free life, individualism, subversive, unwoke cultural movement and I thought everybody was on board but suddenly BAM we’ve got a bunch of them spawning into sex-negative tradcaths or whatever […] i’m just sad cause i thought this section of culture were my allies. we both were like ‘leave me alone, authoritarian government/culture’, and were appropriately skeptical of novel identity movements, willing to say the weirdo things.

Over on Bluesky, Mike Drucker sneers this as “German scientists in 1945 filling out their job application for Operation Paperclip”.

Oh no it’s more US politics.

So as part of the ongoing administrative coup, federal employees have been receiving stupid emails from what everyone assumes is Elon Musk (since it’s the exact same playbook as the twitter firings). But they apparently royally flubbed up NOAA’s email security in the process, so the employees are getting constant spam through an unsecured broadcast address.

Finally, a thing Musk prob did all by himself. After the “print out your code” thing, I assume his technical knowledge is firmly stuck in 1999.

lol holy shit this just came into reddit sneerclub (and was zapped immediately)

user HardboiledHack

also tried r/lesswrong and r/slatestarcodex

i’m sure the guardian can be trusted to report on anything involving trans people

the journalist is J Oliver Conroy https://www.theguardian.com/profile/j-oliver-conroy who now writes for the Washington Examiner and used to write for Quillette (the article has been deleted from the site) https://archive.is/aSBjW

from http://joliverconroy.net/

I am a journalist who specializes in features and profiles. I write about the American right, ideologues, intellectuals, extremist movements, the culture wars, true crime, and strange events and strange places.

by “about”, he means “for”

I’m a journalist at the Guardian working on a piece about the Zizians. If you have encountered members of the group or had interactions with them, or know people who have, please contact me: oliver.conroy@theguardian.com.

I’m also interested in chatting with people who can talk about the Zizians’ beliefs and where they fit (or did not fit) in the rationalist/EA/risk community.

I prefer to talk to people on the record but if you prefer to be anonymous/speak on background/etc. that can possibly be arranged.

Thanks very much.

i’ve also warned over at the old place

Do the Zizians fit in the “rationalist/EA/risk community”? Gosh and golly gee.

Yuddites and Zizians are a better example of the “narcissism of small differences” than any of the ones that Siskind propped up.

From what I’ve been able to piece together from the various theological disputes people have had with the murder cult it seems like the only two differences are that Ziz and friends are much more committed to nonhuman animal welfare than the average rat and that they have decided that the correct approach to conflict is always to escalate. This makes them more aggressive about basically everything which looks like a much deeper ideological gap than there actually is. I’m not going to evaluate whether these are reasonable conclusions to take from the same bizarre set of premises that lead to Roko’s Basilisk being a concern.

However, I do think that the unfolding of this story presents an object lesson in why “always escalate to the max” is a wildly stupid idea. It turns out that even when you have guns (metaphorical or otherwise) and a complete disregard for the consequences of failure the average group of citizens is still at a decided disadvantage to the state at higher points on the escalation ladder.

You misunderstand, they escalate to the max to keep themselves (including selves in parallel dimensions or far future simulations) from being blackmailed by future super intelligent beings, not to survive shootouts with border patrol agents.

I am fairly certain Yud had said something very close to that effect in reference to preventing blackmail from the basilisk, even though he tries to no-true-Scotsman the Zizians wrt his functional decision ‘theory’ these days.

No nibbles here.

Terrible news: the worst person I know just made a banger post.